Raw and often unlabeled data can be retrieved and organized using representation learning. A model's ability to develop a good representation depends on the quantity, quality, and diversity of the data. In doing so, the model mirrors the data's inherent collective intelligence. Put simply, the output is only as good as the input. Unsurprisingly, the most effective visual representation learning algorithms today depend on massive real-world datasets. Collecting real data, however, comes with its own challenges. Gathering vast amounts of unfiltered data is feasible because it is inexpensive. However, adding uncurated data has less impact at large data scales, which indicates poor scaling behavior for self-supervised representation learning with this approach. Collecting curated data on a smaller scale is also possible, but models trained this way can only handle very specific tasks.

To reduce the financial burden, new research by Google Research and MIT CSAIL investigates whether large-scale curated datasets capable of training state-of-the-art visual representations can be built from synthetic data produced by off-the-shelf generative models. The authors call this approach learning from models, as opposed to learning directly from data. Using models as a data source for constructing large-scale training sets has several benefits. First, the proposed method can curate data through the new controls that models expose: latent variables, conditioning variables, and hyperparameters. Second, models are more compact than the data they can produce, so they are easier to store and share. Furthermore, models can generate endless data samples, albeit with limited variability.

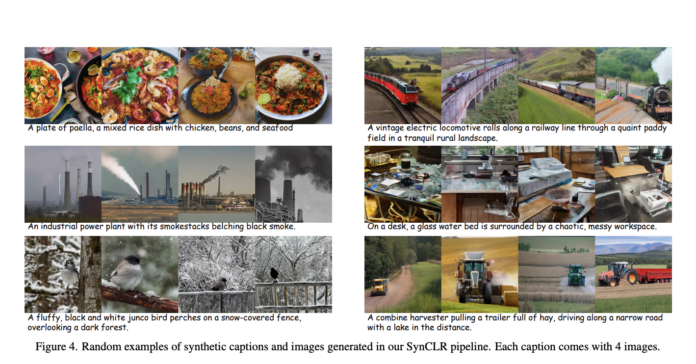

In this study, the researchers use generative models to rethink the granularity of visual classes. For instance, consider the four images generated from the following prompts: "A cute golden retriever sits in a house made of sushi" and "A golden retriever, wearing sunglasses and a beach hat, rides a motorbike." Traditional self-supervised methods like SimCLR treat each image as a separate class, pushing apart the embeddings of different images without explicitly considering their shared semantics. Supervised learning algorithms (like SupCE), in contrast, treat all of these images as belonging to the same class (such as "golden retriever").

Because collecting multiple images described by a given caption is non-trivial, particularly when scaling up the number of captions, this level of granularity is difficult to mine from real data. Text-to-image diffusion models, on the other hand, offer this capability intrinsically: given the same caption as conditioning input and different noise inputs, they can generate many images that match the caption.
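To make the three granularities concrete, the toy sketch below builds, for a batch of four generated images, the matrix of which image pairs are grouped together under each scheme. It is a simplification under stated assumptions: the caption IDs and the `positive_mask` helper are hypothetical, SimCLR's real positives are augmented views of the same image (not modeled here), and SupCE uses a classification loss rather than a pairwise mask, so the class-level mask only shows which images share a label.

```python
# Toy batch: each entry is (caption_id, class_label) for one generated image.
# Hypothetical IDs: two captions, two images each, all of class "golden retriever".
batch = [
    ("sushi_house", "golden retriever"),
    ("sushi_house", "golden retriever"),
    ("beach_motorbike", "golden retriever"),
    ("beach_motorbike", "golden retriever"),
]

def positive_mask(keys):
    """Hypothetical helper: return an NxN matrix where [i][j] is True when
    images i and j share the same key, excluding the image itself."""
    n = len(keys)
    return [[i != j and keys[i] == keys[j] for j in range(n)] for i in range(n)]

# SimCLR-style: every image is its own class, so no *other* image is a positive.
image_level = positive_mask(list(range(len(batch))))
# Caption-level: images generated from the same caption are grouped together.
caption_level = positive_mask([caption for caption, _ in batch])
# SupCE-style: all images with the same class label are grouped together.
class_level = positive_mask([label for _, label in batch])

for name, mask in [("image", image_level), ("caption", caption_level), ("class", class_level)]:
    count = sum(sum(row) for row in mask)  # number of ordered positive pairs
    print(f"{name}-level positive pairs: {count}")
```

Caption-level grouping sits between the two extremes: each image is paired with the other image from its own prompt, but not with the images from the other prompt, even though all four show a golden retriever.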

The findings show that caption-level granularity works better than both SimCLR's image-level granularity and the class-level granularity of supervised training. Another advantage is that this definition of visual classes is easily extensible: online class (or data) augmentation could, in principle, scale to an unlimited number of classes, unlike ImageNet-1k/21k, which uses a fixed number of classes. The proposed system has three stages:

- The first stage synthesizes a large collection of image captions. Using word-to-caption translation examples, the team developed a scalable method that exploits the in-context learning ability of large language models (LLMs).

- The next stage uses a text-to-image diffusion model to generate many synthetic images for each caption, yielding a dataset of 600 million images.

- Finally, they train visual representation models using masked image modeling and multi-positive contrastive learning.
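The first stage relies on few-shot prompting: the LLM sees several concept-to-caption demonstrations and then completes a caption for a new concept. The minimal sketch below only assembles such a prompt; it does not call an LLM. Everything in it is an assumption for illustration: the `=>` format, the `build_caption_prompt` helper, and all demonstrations except the first (which reuses a caption from this article) are hypothetical placeholders, not the paper's actual template or examples.

```python
import random

# Hypothetical (concept word, caption) demonstrations. Only the first caption
# comes from the article; the rest are illustrative placeholders.
EXAMPLES = [
    ("golden retriever", "A cute golden retriever sits in a house made of sushi."),
    ("lighthouse", "A red-and-white lighthouse stands on a rocky cliff at sunset."),
    ("teapot", "A porcelain teapot with blue flowers rests on a wooden table."),
    ("bicycle", "A vintage bicycle leans against a brick wall covered in ivy."),
]

def build_caption_prompt(concept, examples, k=3, seed=None):
    """Assemble a few-shot prompt asking an LLM to turn a concept word into an
    image caption. Sampling a different subset of demonstrations on each call
    is one way to vary the captions the LLM produces."""
    rng = random.Random(seed)
    shots = rng.sample(examples, k)  # k distinct demonstrations
    lines = [f"{word} => {caption}" for word, caption in shots]
    lines.append(f"{concept} =>")  # the LLM is expected to complete this line
    return "\n".join(lines)

prompt = build_caption_prompt("red panda", EXAMPLES, k=3, seed=0)
print(prompt)
```

Enlarging the pool of demonstrations, as the authors suggest for future work, would directly widen the variety of prompts this kind of sampling can produce.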
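In the multi-positive contrastive objective, every other image generated from the same caption counts as a positive for a given anchor. Below is a minimal NumPy sketch of such a loss: a cross-entropy between a softmax over pairwise similarities and a uniform target distribution over the positives. The function name, the temperature of 0.1, and the toy embeddings are assumptions; the paper's full training recipe, including its masked image modeling term and encoder architecture, is not reproduced here.

```python
import numpy as np

def multi_positive_contrastive_loss(embeddings, caption_ids, temperature=0.1):
    """Hypothetical helper: cross-entropy between a softmax over pairwise
    similarities and a uniform target over all other images sharing the
    anchor's caption. Assumes every caption appears at least twice."""
    z = embeddings / np.linalg.norm(embeddings, axis=1, keepdims=True)  # L2-normalize
    logits = z @ z.T / temperature
    n = len(caption_ids)
    self_mask = np.eye(n, dtype=bool)
    logits = np.where(self_mask, -np.inf, logits)  # exclude each anchor from its own softmax
    ids = np.asarray(caption_ids)
    pos = (ids[:, None] == ids[None, :]) & ~self_mask  # same caption, different image
    target = pos / pos.sum(axis=1, keepdims=True)  # uniform distribution over positives
    # Numerically stable log-softmax over each row.
    row_max = logits.max(axis=1, keepdims=True)
    log_prob = logits - row_max - np.log(np.exp(logits - row_max).sum(axis=1, keepdims=True))
    # Zero out non-positive entries so 0 * (-inf) on the diagonal never yields NaN.
    per_anchor = -(target * np.where(pos, log_prob, 0.0)).sum(axis=1)
    return per_anchor.mean()

caption_ids = [0, 0, 1, 1]  # two captions, two generated images each
# Embeddings that group images by caption vs. embeddings that mix them up.
aligned = np.array([[1.0, 0.0], [1.0, 0.0], [0.0, 1.0], [0.0, 1.0]])
mixed = np.array([[1.0, 0.0], [0.0, 1.0], [1.0, 0.0], [0.0, 1.0]])
print(multi_positive_contrastive_loss(aligned, caption_ids))  # close to 0
print(multi_positive_contrastive_loss(mixed, caption_ids))    # roughly 10
```

With a single positive per anchor this reduces to a standard InfoNCE-style loss; with several positives per caption, the uniform target spreads the probability mass across all of them instead of singling out one.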

The researchers compare against OpenAI's CLIP on top-1 linear probing accuracy on ImageNet-1K: with SynCLR pre-training, the ViT-B model reaches 80.7% and the ViT-L model 83.0%. On fine-grained classification tasks, SynCLR achieves results comparable to those of DINO v2 models distilled from a pre-trained ViT-g model, surpassing CLIP by 3.3% for ViT-B and 1.5% for ViT-L. For semantic segmentation on ADE20k, SynCLR beats MAE pre-trained on ImageNet by 6.2 and 4.1 mIoU for ViT-B and ViT-L, respectively, in the same setup. This demonstrates that SynCLR transfers well to dense prediction tasks, much like DINO v2, even though DINO v2 additionally trains on images at a resolution of 518×518 and SynCLR does not.
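Linear probing keeps the pre-trained encoder frozen and trains only a linear classifier on its output features; top-1 accuracy is the fraction of test images whose highest-scoring class is the correct one. The minimal NumPy sketch below illustrates the idea with synthetic 2-D features standing in for ViT embeddings (an assumption). The helper name, learning rate, and step count are hypothetical, and the paper's actual evaluation protocol is not reproduced.

```python
import numpy as np

def linear_probe_top1(train_x, train_y, test_x, test_y, num_classes, lr=0.1, steps=200):
    """Hypothetical helper: fit a softmax (multinomial logistic) classifier on
    frozen features with full-batch gradient descent, then return top-1
    accuracy on held-out features."""
    n, d = train_x.shape
    w = np.zeros((d, num_classes))
    b = np.zeros(num_classes)
    onehot = np.eye(num_classes)[train_y]
    for _ in range(steps):
        logits = train_x @ w + b
        logits -= logits.max(axis=1, keepdims=True)  # numerical stability
        probs = np.exp(logits)
        probs /= probs.sum(axis=1, keepdims=True)
        grad = (probs - onehot) / n  # gradient of mean cross-entropy w.r.t. logits
        w -= lr * train_x.T @ grad
        b -= lr * grad.sum(axis=0)
    preds = np.argmax(test_x @ w + b, axis=1)  # top-1 prediction per test sample
    return float((preds == test_y).mean())

# Synthetic "frozen features": two well-separated Gaussian clusters.
rng = np.random.default_rng(0)
centers = np.array([[3.0, 0.0], [-3.0, 0.0]])
train_y = rng.integers(0, 2, size=200)
test_y = rng.integers(0, 2, size=100)
train_x = centers[train_y] + rng.normal(size=(200, 2))
test_x = centers[test_y] + rng.normal(size=(100, 2))
acc = linear_probe_top1(train_x, train_y, test_x, test_y, num_classes=2)
print(f"top-1 linear probe accuracy: {acc:.2%}")
```

Because only the linear layer is trained, the resulting accuracy mainly reflects how linearly separable the frozen representations already are, which is why it is a common yardstick for comparing pre-training methods.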

The team highlights several ways to improve the caption sets, such as using more sophisticated LLMs, improving the sampling ratios among distinct concepts, and expanding the library of in-context examples. The learning process could also be improved by adding a high-resolution training phase or an intermediate IN-21k fine-tuning stage after distilling knowledge from a bigger model. They further suggest that better model initialization procedures, combined with integrating SwiGLU and LayerScale, could yield architectural benefits. However, they leave these directions to future research because of limited resources and because this paper did not aim to achieve the best possible metrics.

Dhanshree Shenwai is a Computer Science Engineer with solid experience at FinTech companies covering the Financial, Cards & Payments, and Banking domains, and a keen interest in applications of AI. She is enthusiastic about exploring new technologies and advancements in today's evolving world that make everyone's life easier.